More than 70% of critical application outages start at the data layer, by some estimates. Your revenue, reputation, and user trust hinge on a foundation that can, and will, fail.

Traditional manual recovery creates costly delays. Engineers scramble at 2 AM, but the damage is already done. What if your infrastructure could diagnose and repair itself autonomously?

That’s the promise of a self-healing architecture: an automated framework that detects issues and applies fixes without human input. Because it monitors continuously, it can handle everything from hardware malfunctions to network disruptions.

The result? You dramatically cut downtime from hours to seconds. This transforms your data layer from a constant vulnerability into a resilient asset. Your team focuses on innovation, not firefighting.

This guide cuts through the theory. We’ll show you practical steps to build this resilience. You’ll learn how to stop reacting to common database errors and start preventing them.

Let’s build a foundation where data reliability is engineered, not just hoped for.

Fundamentals of Self-Healing Database Systems

Imagine your infrastructure diagnosing its own issues and applying fixes before you even get an alert. That’s the promise of autonomous recovery.

Understanding the Concept and Key Benefits

This capability isn’t magic; it’s orchestrated automation. Your data layer continuously monitors its own health, then responds according to predefined rules and patterns learned from past incidents.

The performance gains can be substantial. Architectures that auto-recover can cut mean time to recovery (MTTR) by up to 95%. For failures with a fully automated fix, hours of downtime shrink to seconds.
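MTTR is total recovery time divided by the number of incidents, so you can track the reduction from your own incident history. A minimal sketch; the durations below are hypothetical values chosen for illustration, not measurements:

```python
def mttr_minutes(recovery_minutes: list[float]) -> float:
    """Mean time to recovery: total recovery time divided by incident count."""
    if not recovery_minutes:
        raise ValueError("need at least one incident")
    return sum(recovery_minutes) / len(recovery_minutes)


def percent_reduction(before: float, after: float) -> float:
    """Relative MTTR improvement, as a percentage of the original value."""
    return (before - after) / before * 100


# Hypothetical per-incident recovery times, in minutes.
manual = [120, 90, 150]      # human-driven recovery
automated = [6, 4.5, 7.5]    # automated recovery

before = mttr_minutes(manual)     # 120.0
after = mttr_minutes(automated)   # 6.0
print(f"MTTR {before:.1f} -> {after:.1f} min ({percent_reduction(before, after):.0f}% lower)")
```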

You get several key advantages. Reduced downtime ensures continuous service. Major cost efficiency comes from automating routine fixes. Enhanced reliability stems from consistent, automated responses. Finally, user experience improves with fewer interruptions.

Essential Components for Autonomous Recovery

Six core components work together in this design. Monitoring sensors collect real-time performance data. A diagnostics engine analyzes this data to pinpoint root causes.

A decision-making module then chooses the optimal response. An execution framework implements the fix through automated scripts. A knowledge base stores solutions for future reference.

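A feedback loop learns from each incident to refine the other components. For example, when sensors detect connection pool exhaustion, the diagnostics engine confirms the cause, the decision module chooses a scale-up, and the execution framework enlarges the pool. This entire process happens without manual intervention, boosting overall data reliability.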
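The six components can be wired into one control loop. The sketch below is a toy under stated assumptions: the database is a plain dict, the 90% pool-utilization trigger and the `grow_pool` action are hypothetical, and a real execution framework would call your database or orchestration API rather than editing a value in memory.

```python
from dataclasses import dataclass, field


@dataclass
class SelfHealingLoop:
    # Knowledge base: diagnosis -> remediation name (hypothetical entries).
    knowledge_base: dict = field(default_factory=lambda: {"pool_exhausted": "grow_pool"})
    # Feedback loop: (diagnosis, action, succeeded) records for later tuning.
    history: list = field(default_factory=list)

    def sense(self, db: dict) -> dict:
        """Monitoring sensor: sample current metrics."""
        return {"pool_utilization": db["active"] / db["pool_size"]}

    def diagnose(self, metrics: dict):
        """Diagnostics engine: map raw metrics to a named root cause."""
        if metrics["pool_utilization"] >= 0.9:
            return "pool_exhausted"
        return None

    def decide(self, diagnosis):
        """Decision module: look up the known fix for this diagnosis."""
        return self.knowledge_base.get(diagnosis)

    def execute(self, action: str, db: dict) -> bool:
        """Execution framework: apply the fix (here, double the pool size)."""
        if action == "grow_pool":
            db["pool_size"] *= 2
            return True
        return False

    def run_once(self, db: dict):
        diagnosis = self.diagnose(self.sense(db))
        action = self.decide(diagnosis) if diagnosis else None
        if action is None:
            return None
        ok = self.execute(action, db)
        self.history.append((diagnosis, action, ok))  # feed the outcome back
        return action


db = {"active": 19, "pool_size": 20}
loop = SelfHealingLoop()
print(loop.run_once(db), db["pool_size"])
```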

Some organizations report a 60-80% drop in tickets needing human help within six months. Designing these components in from the start makes resilience part of the architecture rather than an afterthought.

Proactive Failure Detection and Health Monitoring

What if you could spot trouble brewing in your data infrastructure 30 minutes before it crashes? Proactive monitoring gives you that power. It transforms guesswork into precise, actionable information.

Implementing Real-Time Observation Techniques

You can’t fix what you don’t see. Start by exposing health endpoints from every service. These endpoints give external monitoring tools a live feed of your system’s state and dependencies.

Application performance monitoring (APM) tools are crucial here. They track key metrics against dynamic thresholds that adapt to each metric’s normal baseline, which reduces false alarms while still catching real anomalies.

Your monitoring strategy must watch specific signals: query response times, connection pool utilization, and replication lag. Each metric tells part of your system’s health story.
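A dynamic threshold compares each new sample against the metric’s own recent baseline instead of a fixed limit. One common approximation, shown here as a sketch rather than any particular APM tool’s algorithm, flags a sample that sits more than `k` standard deviations above a rolling mean. The window size, `k=3`, and the latency values are assumptions to tune per metric.

```python
from collections import deque
from statistics import mean, pstdev


class DynamicThreshold:
    """Flags samples far above a rolling baseline (mean + k * std dev)."""

    def __init__(self, window: int = 30, k: float = 3.0, min_samples: int = 10):
        self.samples = deque(maxlen=window)
        self.k = k
        self.min_samples = min_samples  # don't judge until a baseline exists

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous relative to the current baseline."""
        anomalous = False
        if len(self.samples) >= self.min_samples:
            baseline = mean(self.samples)
            spread = pstdev(self.samples)
            anomalous = value > baseline + self.k * spread
        # Learn only from normal samples so a spike does not inflate the baseline.
        if not anomalous:
            self.samples.append(value)
        return anomalous


# Hypothetical query latencies in ms: steady around 20 ms, then a spike.
detector = DynamicThreshold()
latencies = [19, 21, 20, 22, 18, 20, 21, 19, 20, 22, 80]
print([detector.observe(ms) for ms in latencies])
```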

Identifying Early Warning Signs and Anomalies

Early warnings often appear 15-30 minutes before complete failures. For example, replication lag exceeding 10 seconds signals that a replica can no longer keep up with the primary’s write volume.

Machine learning models can analyze these patterns, predicting potential failures from combinations of metrics rather than single threshold breaches. This lets your automated responses act before the failure occurs.
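Production systems may use trained models here, but the core idea, alerting on combinations rather than single breaches, can be shown with a simple rule-based stand-in. In this sketch the 10-second replication-lag limit comes from the example above; the other warning levels and the two-signal rule are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    replication_lag_s: float
    p95_query_ms: float
    pool_utilization: float  # fraction of connections in use, 0.0-1.0


# Warning level per signal; only replication lag comes from the text, the rest are hypothetical.
LIMITS = {
    "replication_lag_s": 10.0,
    "p95_query_ms": 250.0,
    "pool_utilization": 0.8,
}


def elevated_signals(s: Snapshot) -> list[str]:
    """Names of the signals currently above their warning level."""
    return [name for name, limit in LIMITS.items() if getattr(s, name) > limit]


def early_warning(s: Snapshot, min_signals: int = 2) -> bool:
    """Warn only when several signals degrade together, not on one breach."""
    return len(elevated_signals(s)) >= min_signals


print(early_warning(Snapshot(12.0, 120.0, 0.5)))   # one signal elevated -> False
print(early_warning(Snapshot(12.0, 310.0, 0.85)))  # three signals elevated -> True
```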

Fixing a problem early costs a fraction of the downtime it would otherwise cause. Proactive detection is your most powerful tool for reliability.

Leveraging Self-Healing Database Systems for Seamless Recovery

The true test of your architecture isn’t whether it fails, but how it recovers. Seamless recovery means your services stay up even when underlying components don’t.

Automated Fault Diagnosis and Rapid Response

Automated diagnosis can analyze a failure signature in milliseconds, matching the pattern against a knowledge base of known issues.

Your system then walks a precise decision tree: it identifies the fault, assesses the impact, and selects the optimal fix. Ideally, this entire process completes before users notice any degradation.
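The identify, assess, and select steps can be expressed as a small decision tree. This is a hypothetical sketch: the error signatures, the impact rule, and the action names are illustrative and not taken from any specific product. Unrecognized failures fall through to a human.

```python
from enum import Enum


class Impact(Enum):
    LOW = 1
    HIGH = 2


# Knowledge base of known failure signatures (hypothetical).
KNOWN_FAULTS = {
    "ECONNREFUSED": "node_down",
    "too many connections": "pool_exhausted",
    "disk full": "storage_full",
}


def identify(error_message: str) -> str:
    """Match the failure signature against known issues."""
    for signature, fault in KNOWN_FAULTS.items():
        if signature in error_message:
            return fault
    return "unknown"


def assess(fault: str, is_primary: bool) -> Impact:
    """Treat faults on the primary, and full storage, as high impact."""
    return Impact.HIGH if is_primary or fault == "storage_full" else Impact.LOW


def select_fix(fault: str, impact: Impact) -> str:
    if fault == "unknown":
        return "page_on_call"  # novel issue: escalate to a human
    if fault == "node_down":
        return "promote_replica" if impact is Impact.HIGH else "replace_replica"
    if fault == "pool_exhausted":
        return "grow_pool"
    return "expand_storage"


def respond(error_message: str, is_primary: bool) -> str:
    fault = identify(error_message)
    return select_fix(fault, assess(fault, is_primary))


print(respond("connect ECONNREFUSED 10.0.0.5:5432", is_primary=True))
```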

For example, a primary node failure triggers an immediate, automated response: a synchronously replicated standby is promoted within seconds, and all write traffic is rerouted to it. Because that standby already holds every committed write, your application experiences no data loss.
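A failover controller first has to choose which replica to promote. This sketch assumes each replica reports its health, whether it is synchronous, and its replication lag; preferring a healthy synchronous replica is what makes the no-data-loss outcome possible. The `Replica` fields and the selection rule are assumptions, not a specific database’s API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Replica:
    name: str
    healthy: bool
    synchronous: bool
    lag_seconds: float


def choose_promotion_target(replicas: list[Replica], allow_async: bool = False) -> Optional[Replica]:
    """Prefer a healthy synchronous replica; optionally fall back to the least-lagged async one."""
    healthy = [r for r in replicas if r.healthy]
    sync = [r for r in healthy if r.synchronous]
    if sync:
        return min(sync, key=lambda r: r.lag_seconds)
    if allow_async and healthy:
        # Async promotion risks losing writes that were not yet replicated.
        return min(healthy, key=lambda r: r.lag_seconds)
    return None  # no safe candidate: escalate instead of guessing


replicas = [
    Replica("replica-a", healthy=True, synchronous=False, lag_seconds=0.4),
    Replica("replica-b", healthy=True, synchronous=True, lag_seconds=0.0),
    Replica("replica-c", healthy=False, synchronous=True, lag_seconds=0.0),
]
target = choose_promotion_target(replicas)
print(target.name if target else "no safe target")
```

After promotion, the controller would still need to repoint the write endpoint (for example, a DNS record or proxy) at the new primary; that step depends on your platform and is omitted here.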

Utilizing Asynchronous Communication to Prevent Cascading Failures

Asynchronous patterns decouple your services in time and space. Components communicate via events, not direct, instant calls.

This design breaks the chain of cascading failures. If your database has a temporary glitch, write operations queue in a message broker. Your front-end application continues serving users from cached data.
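The sketch below shows the pattern in a single process, with a `collections.deque` standing in for a message broker and a dict acting as the read cache. `FlakyDatabase` and `OrderService` are hypothetical classes that simulate a temporary outage.

```python
from collections import deque


class FlakyDatabase:
    """Hypothetical database that can be switched offline to simulate a glitch."""

    def __init__(self):
        self.rows = {}
        self.online = True

    def write(self, key, value):
        if not self.online:
            raise ConnectionError("database unavailable")
        self.rows[key] = value


class OrderService:
    def __init__(self, db: FlakyDatabase):
        self.db = db
        self.outbox = deque()  # stand-in for a durable message broker
        self.cache = {}        # recent values, served to readers during outages

    def submit(self, key, value) -> None:
        """Accept the write immediately; persistence happens later in drain()."""
        self.cache[key] = value
        self.outbox.append((key, value))

    def read(self, key):
        return self.cache.get(key)

    def drain(self) -> int:
        """Worker step: flush queued writes in order, stopping at the first failure."""
        flushed = 0
        while self.outbox:
            key, value = self.outbox[0]  # peek; remove only after a successful write
            try:
                self.db.write(key, value)
            except ConnectionError:
                break                    # database still down: keep the rest queued
            self.outbox.popleft()
            flushed += 1
        return flushed


db = FlakyDatabase()
svc = OrderService(db)
db.online = False                         # simulate the glitch
svc.submit("order-1", {"sku": "A1", "qty": 2})
print(svc.read("order-1"), svc.drain())   # read served from cache; nothing flushed yet
db.online = True                          # database recovers
print(svc.drain(), db.rows)               # queued write now reaches the database
```

In a real deployment the outbox would be a durable broker, so queued writes survive a restart, and `drain` would run in a separate worker.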

Consider an e-commerce platform during a database connection failure. Order processing remains critical, so the platform might disable non-essential features such as recommendations while continuing to accept purchases.

This is graceful degradation in action. It ensures core functionality survives when a subsystem has a problem, and your overall resilience improves dramatically.
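Graceful degradation can be as simple as classifying features by criticality and switching off the non-essential ones while a dependency is unhealthy. The feature names and the two-tier split below are hypothetical.

```python
# Hypothetical feature tiers for a product page.
CRITICAL = {"checkout", "order_status"}
NON_ESSENTIAL = {"recommendations", "recently_viewed"}


def enabled_features(db_healthy: bool) -> set[str]:
    """Keep core purchase flows on; shed non-essential features during a DB outage."""
    if db_healthy:
        return CRITICAL | NON_ESSENTIAL
    return set(CRITICAL)


def render_product_page(db_healthy: bool) -> dict:
    features = enabled_features(db_healthy)
    return {
        "buy_button": "checkout" in features,
        "show_recommendations": "recommendations" in features,
    }


print(render_product_page(db_healthy=False))
```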

Implementing Recovery Mechanisms and Resiliency Patterns

Your recovery mechanisms determine whether a minor hiccup becomes a major outage. You need patterns that automatically handle transient faults and isolate problems.

Adopting Retry Strategies and Circuit Breakers

Start with smart retry logic for temporary network blips. Use exponential backoff, increasing the wait before each successive attempt (for example, doubling it), to give a struggling service room to recover.

Your code must distinguish retryable from non-retryable errors. A timeout might deserve another try. An authentication failure does not.
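A minimal retry helper along these lines, assuming `TimeoutError` and `ConnectionError` represent transient faults and `PermissionError` stands in for an authentication failure. The defaults of three retries and a 100 ms starting delay match the values suggested in this section. The `sleep` parameter is injectable so the backoff can be observed without actually waiting.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

# Transient faults worth retrying; anything else (e.g. PermissionError) fails immediately.
RETRYABLE = (TimeoutError, ConnectionError)


def with_retries(op: Callable[[], T], max_retries: int = 3, base_delay: float = 0.1,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Run op, retrying transient errors with exponential backoff (0.1 s, 0.2 s, 0.4 s)."""
    for attempt in range(max_retries + 1):
        try:
            return op()
        except RETRYABLE:
            if attempt == max_retries:
                raise  # out of retries: surface the last error
            sleep(base_delay * (2 ** attempt))
    raise AssertionError("unreachable")


calls = {"n": 0}


def flaky_query() -> str:
    """Hypothetical query that times out twice, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("query timed out")
    return "rows"


delays = []
print(with_retries(flaky_query, sleep=delays.append), delays)
```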

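Circuit breakers stop retry storms. A breaker opens once failures cross a threshold, such as a number of consecutive errors or a failure rate, and then fails fast so your application stops wasting resources on a dependency that is down.

For example, block new requests if 50% fail within ten seconds. After a cool-down, the breaker lets a trial request through (the half-open state) and closes again if it succeeds. This closed, open, half-open state machine is a core resilience component.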
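A compact, single-threaded circuit breaker using the failure-rate rule from the example: it opens when at least half of the calls in a ten-second window fail, rejects calls while open, and after a cool-down lets one trial call through (often called the half-open state). The `min_calls` guard, the 30-second cool-down, and the injectable clock are assumptions added for the sketch.

```python
import time
from collections import deque
from typing import Callable, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised instead of calling the dependency while the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_rate: float = 0.5, window_s: float = 10.0, min_calls: int = 4,
                 cooldown_s: float = 30.0, clock: Callable[[], float] = time.monotonic):
        self.failure_rate = failure_rate
        self.window_s = window_s
        self.min_calls = min_calls      # don't judge the rate on too few samples
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.results: deque = deque()   # (timestamp, succeeded) pairs inside the window
        self.state = "closed"
        self.opened_at = 0.0

    def _trip(self) -> None:
        self.state, self.opened_at = "open", self.clock()

    def _record(self, ok: bool) -> None:
        now = self.clock()
        self.results.append((now, ok))
        while now - self.results[0][0] > self.window_s:  # drop samples older than the window
            self.results.popleft()
        failures = sum(1 for _, succeeded in self.results if not succeeded)
        if (not ok and len(self.results) >= self.min_calls
                and failures / len(self.results) >= self.failure_rate):
            self._trip()

    def call(self, op: Callable[[], T]) -> T:
        if self.state == "open":
            if self.clock() - self.opened_at < self.cooldown_s:
                raise CircuitOpenError("failing fast: dependency presumed down")
            self.state = "half_open"    # cool-down over: allow one trial call
        try:
            result = op()
        except Exception:
            if self.state == "half_open":
                self._trip()            # trial failed: reopen
            else:
                self._record(False)
            raise
        if self.state == "half_open":
            self.state = "closed"       # trial succeeded: resume normal traffic
            self.results.clear()
        else:
            self._record(True)
        return result
```

Callers would wrap each database call in `breaker.call(...)` and catch `CircuitOpenError` to fall back, for example to cached data.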

Isolate critical components with the bulkhead pattern too. Separate connection pools per workload prevent one misbehaving workload from consuming every connection or thread, so your entire system stays responsive.
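A bulkhead can be approximated with a separate, bounded concurrency limit per workload, so a slow reporting query cannot starve checkout traffic. This sketch uses `threading.BoundedSemaphore` as a stand-in for separate connection pools; the workload names and sizes are hypothetical.

```python
import threading
from contextlib import contextmanager


class BulkheadFull(RuntimeError):
    pass


class Bulkhead:
    """Caps concurrent work for one workload; excess requests are rejected, not queued."""

    def __init__(self, name: str, max_concurrent: int):
        self.name = name
        self._slots = threading.BoundedSemaphore(max_concurrent)

    @contextmanager
    def slot(self):
        if not self._slots.acquire(blocking=False):
            raise BulkheadFull(f"{self.name} bulkhead is full")
        try:
            yield
        finally:
            self._slots.release()


# Separate compartments: reporting can saturate its own slots without touching checkout's.
BULKHEADS = {"checkout": Bulkhead("checkout", 20), "reporting": Bulkhead("reporting", 5)}
```

Each workload would take a slot from its own bulkhead before borrowing a connection, for example `with BULKHEADS["reporting"].slot(): ...`; when reporting is saturated, checkout still has all 20 of its slots.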

Configure these mechanisms with discipline. A reasonable starting point is to limit retries to three, start backoff at 100 ms, and set explicit thresholds for your breakers. This design philosophy keeps small errors small.

Integrating Monitoring Tools and Automated Responses

If your alerts don’t trigger fixes, you’re just watching the ship sink. The real power comes from connecting your observability stack directly to automated repair scripts.

Your monitoring tools must do more than collect metrics. They need to execute recovery procedures the instant a predefined condition is met. This closes the loop between detection and resolution.

Effective Health Endpoint Monitoring Strategies

Every critical service should expose a health endpoint. This API returns structured data on connectivity, replication status, and dependency health.

Effective checks go beyond simple pings. They run representative queries and verify connection pool capacity. Load balancers then use this information to route traffic away from unhealthy instances in real time.

This process removes failing components before users ever see an error. It’s a foundational pattern for any resilient system.
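A sketch of such an endpoint using only Python’s standard library. The three check functions are hypothetical placeholders; in practice they would run a representative query, read the replica’s lag, and inspect the driver’s pool statistics. Returning HTTP 503 when any check fails lets a load balancer configured to expect 200 take the instance out of rotation. The 10-second lag limit reuses the early-warning example; the 10% free-pool floor is an assumption.

```python
import json
from http.server import BaseHTTPRequestHandler


# Hypothetical checks: replace with a real query, lag reading, and pool statistics.
def check_query() -> bool:
    return True  # e.g. run "SELECT 1" and confirm it returns quickly


def replication_lag_seconds() -> float:
    return 0.8


def pool_free_fraction() -> float:
    return 0.35


def health_report(max_lag_s: float = 10.0, min_free: float = 0.1) -> tuple[int, dict]:
    """Return (HTTP status, structured body) summarizing dependency health."""
    checks = {
        "query": check_query(),
        "replication": replication_lag_seconds() <= max_lag_s,
        "pool_capacity": pool_free_fraction() >= min_free,
    }
    healthy = all(checks.values())
    return (200 if healthy else 503), {"status": "ok" if healthy else "degraded", "checks": checks}


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/health":
            self.send_error(404)
            return
        code, body = health_report()
        payload = json.dumps(body).encode()
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)
```

To serve it, pass the handler to a server, for example `HTTPServer(("0.0.0.0", 8080), HealthHandler).serve_forever()`, and point the load balancer’s health check at `/health`.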

Establishing Feedback Loops for Continuous Improvement

Your system must learn from every incident. Log the failure details, actions taken, and resolution time. Feed this data back into your decision-making algorithms.
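One way to capture that feedback is a structured incident log you can query for automation candidates. The record fields mirror the ones listed above; the `automation_candidates` rule (fault types resolved manually at least `min_count` times) and the sample incidents are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Incident:
    fault: str           # diagnosed failure type
    action: str          # what was done to resolve it
    automated: bool      # resolved by the system (True) or by a human (False)
    resolution_s: float  # time to resolution, in seconds


def automation_candidates(log: list[Incident], min_count: int = 3) -> list[tuple[str, int]]:
    """Fault types humans keep fixing by hand: prime targets for new automation."""
    manual = Counter(i.fault for i in log if not i.automated)
    return [(fault, n) for fault, n in manual.most_common() if n >= min_count]


def mean_resolution(log: list[Incident], automated: bool) -> float:
    times = [i.resolution_s for i in log if i.automated == automated]
    return sum(times) / len(times) if times else 0.0


log = [
    Incident("pool_exhausted", "grow_pool", True, 4.0),
    Incident("disk_full", "purge_logs", False, 1800.0),
    Incident("disk_full", "purge_logs", False, 2400.0),
    Incident("disk_full", "purge_logs", False, 1500.0),
    Incident("slow_query", "add_index", False, 3600.0),
]
print(automation_candidates(log))
```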

Over time, incident types that once needed human help can become fully automated. Tools like Azure Chaos Studio let you test your recovery paths by deliberately injecting failures. You’ll find gaps in your recovery process before a real crisis hits.

Continuous improvement means asking: can we automate that manual step? Can we detect issues faster? This feedback loop is what makes your architecture smarter and more reliable.

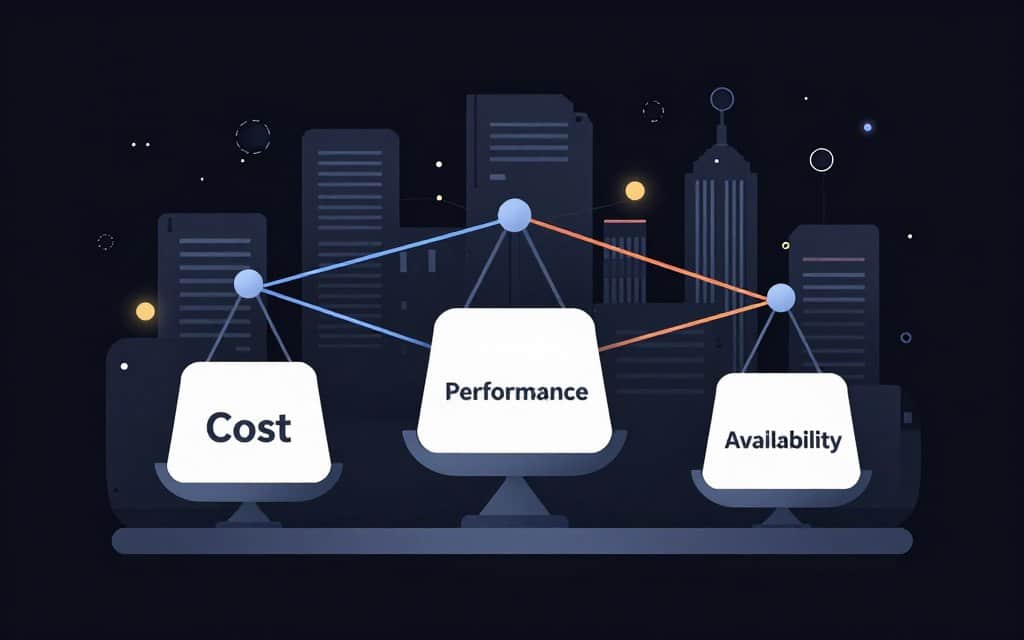

Balancing Cost, Performance, and High Availability

Every extra nine of availability you promise comes with a steep price tag and potential performance hits. Your strategy must align with what your workload truly needs.
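The arithmetic behind that price tag: each additional nine cuts the allowed downtime by a factor of ten. A quick calculation of the yearly downtime budget, assuming a 365-day year:

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600


def downtime_budget_minutes(availability_pct: float) -> float:
    """Maximum downtime per year allowed by an availability target."""
    return MINUTES_PER_YEAR * (1 - availability_pct / 100)


for target in (99.9, 99.99, 99.999):
    print(f"{target}% -> {downtime_budget_minutes(target):.1f} min/year")
```

That is roughly 8.8 hours, 53 minutes, and 5 minutes per year respectively; at five nines, there is little room for any recovery step that waits on a human.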

Evaluating Trade-offs in Replication and Failover

Synchronous replication guarantees zero data loss but adds latency to every write. Asynchronous replication is faster but can lose the last few seconds of committed writes during a failover.

Multi-region setups provide the strongest resilience but can roughly double your infrastructure costs. Zone-redundant services often hit the sweet spot for many production workloads.

Optimizing Resource Allocation for Resiliency

Tier your resilience investments by data criticality. Mission-critical services get robust, multi-zone protection. Less vital data can use simpler, cheaper strategies.

Standby replicas consume resources even when idle. Calculate the revenue at risk and compare it with the extra monthly cost; the comparison makes the business case for high availability clear.
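The comparison is simple expected-value arithmetic. All inputs below are hypothetical, and the model assumes a standby replica turns a long manual outage into a short automated failover:

```python
def expected_annual_loss(outages_per_year: float, hours_per_outage: float,
                         revenue_per_hour: float) -> float:
    """Revenue expected to be lost to downtime in one year."""
    return outages_per_year * hours_per_outage * revenue_per_hour


def standby_pays_off(monthly_standby_cost: float, outages_per_year: float,
                     hours_without: float, hours_with: float,
                     revenue_per_hour: float) -> bool:
    """True if the downtime avoided is worth more than a year of standby cost."""
    avoided = (expected_annual_loss(outages_per_year, hours_without, revenue_per_hour)
               - expected_annual_loss(outages_per_year, hours_with, revenue_per_hour))
    return avoided > 12 * monthly_standby_cost


# Hypothetical: 3 outages/year, 2 h each without a standby vs about 1 min with one,
# $20,000/hour of revenue at risk, $1,500/month for the standby replica.
print(standby_pays_off(1_500, 3, 2.0, 1 / 60, 20_000))
```

With these inputs the avoided loss (about $119,000 a year) far exceeds the $18,000 annual standby cost. With rare, short outages or low revenue per hour, the same calculation can favor a cheaper strategy, which is the tiering argument above.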

Final Reflections on Building a Self-Healing Database Ecosystem

The journey to a resilient data layer isn’t about chasing perfection; it’s about building intelligent adaptability. This demands a fundamental shift in how you architect and operate.

Start small with automated monitoring, then progressively add components like circuit breakers. You should see measurable gains; some teams report an 80-90% drop in downtime.

These solutions aren’t silver bullets. They add complexity and consume extra resources, and a poorly engineered self-healing layer can create more problems than it solves.

Your expertise remains vital for novel issues and strategic design. The future is hybrid intelligence. It combines machine speed with human judgment for ultimate data reliability.